Stop Holding Your Breath: CT-Informed Gaussian Splatting for Dynamic Bronchoscopy

Andrea Dunn Beltran¹, Daniel Rho¹, Aarav Mehta¹, Xinqi Xiong¹, Raúl San José Estépar², Ron Alterovitz¹, Marc Niethammer³, Roni Sengupta¹

¹University of North Carolina at Chapel Hill, ²Harvard Medical School, ³University of California, San Diego

[ Paper ] [ Code coming soon ]

Under Review

Abstract

Bronchoscopic navigation relies on registering endoscopic video to a preoperative CT scan, but respiratory motion deforms the airway by 5–20 mm, creating CT-to-body divergence that limits localization accuracy. In practice, this is mitigated through breath-hold protocols, which attempt to match the intraoperative anatomy to a static CT, but are difficult to reproduce and disrupt clinical workflow. We propose to eliminate the need for breath-hold protocols by leveraging patient-specific respiratory modeling. Paired inhale–exhale CT scans, already acquired for planning, implicitly define the patient-specific deformation space of the breathing airway. By registering these scans, we reduce respiratory motion to a single scalar breathing phase per frame, constraining all reconstructions to anatomically observed configurations. We embed this representation within a mesh-anchored Gaussian splatting framework, where a lightweight estimator infers breathing phase directly from endoscopic RGB, enabling continuous, deformation-aware reconstruction throughout the respiratory cycle without breath-holds or external sensing. To enable quantitative evaluation, we introduce RESPIRE, a physically grounded bronchoscopy simulation pipeline with per-frame ground truth for geometry, pose, breathing phase, and deformation. Experiments on RESPIRE show that our approach achieves geometrically faithful reconstruction, over 20× faster training, and 1.22 mm target localization accuracy within the 3 mm clinically relevant tolerance, outperforming unconstrained single-CT baselines.

RESPIRE Framework

RESPIRE generates realistic bronchoscopic video from paired inspiration/expiration CT scans. The framework provides per-frame RGB images, dense depth, camera pose, breathing phase, and deformation ground truth, enabling controlled evaluation of deformation-aware bronchoscopic reconstruction.
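
As a rough illustration of the per-frame ground truth listed above, one frame could be represented by a record like the following. This is a sketch only: the class name `RespireFrame`, every field name, the file paths, the 4x4 camera-to-world pose convention, and the [0, 1] phase range are assumptions made for this example, not the released RESPIRE format.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class RespireFrame:
    """Hypothetical per-frame record; all field names are illustrative."""
    rgb_path: str                    # rendered RGB image
    depth_path: str                  # dense depth map
    camera_pose: List[List[float]]   # assumed 4x4 camera-to-world matrix
    breathing_phase: float           # assumed scalar in [0, 1]
    deformation_path: str            # ground-truth deformation for this frame

    def __post_init__(self) -> None:
        # Reject malformed records early so evaluation code can trust them.
        if len(self.camera_pose) != 4 or any(len(row) != 4 for row in self.camera_pose):
            raise ValueError("camera_pose must be a 4x4 matrix")
        if not 0.0 <= self.breathing_phase <= 1.0:
            raise ValueError("breathing_phase must lie in [0, 1]")


# Example record with an identity pose and made-up file paths.
identity_pose = [[1.0 if r == c else 0.0 for c in range(4)] for r in range(4)]
frame = RespireFrame(
    rgb_path="frames/000042_rgb.png",
    depth_path="frames/000042_depth.npy",
    camera_pose=identity_pose,
    breathing_phase=0.25,
    deformation_path="frames/000042_deformation.npy",
)
```

Keeping all five signals in one validated record makes it straightforward to score geometry, pose, and phase estimates against the same frame.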

Results

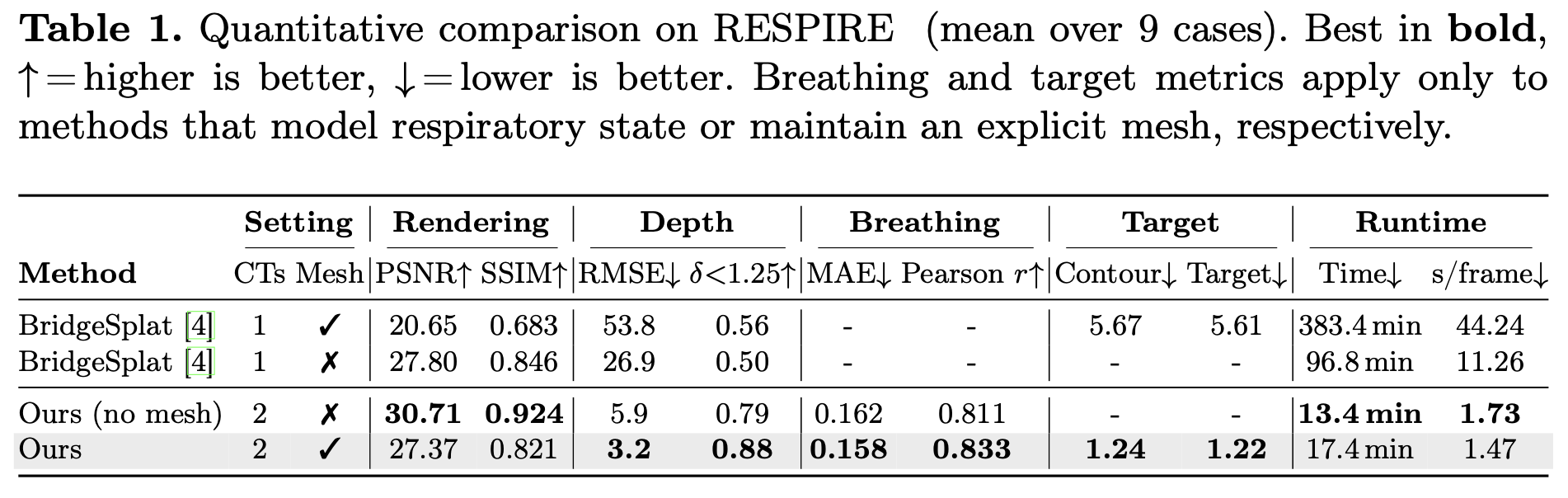

Our method constrains respiratory motion to a patient-specific deformation field derived from paired CT scans, improving geometry and runtime while recovering breathing phase from RGB video.

Constraining deformation to a patient-specific anatomical trajectory removes the appearance–geometry ambiguity that lets unconstrained methods produce plausible RGB while drifting off-surface. Mesh anchoring further forces Gaussians to satisfy the photometric loss through physically consistent motion rather than free drift outside the airway lumen.
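
One common way to anchor a Gaussian to a mesh is to store its center as fixed barycentric weights on a triangle, so the center can only move when the triangle's vertices move. The sketch below illustrates that idea with plain Python. The barycentric binding, the function name `anchored_center`, and the toy triangles are assumptions for illustration; the page does not specify how the anchoring is implemented.

```python
from typing import Sequence, Tuple

Vec3 = Tuple[float, float, float]


def anchored_center(triangle: Sequence[Vec3], bary: Vec3) -> Vec3:
    """Return the point with barycentric weights `bary` on `triangle`."""
    if abs(sum(bary) - 1.0) > 1e-9:
        raise ValueError("barycentric weights must sum to 1")
    # Weighted sum of the three vertices, computed per coordinate axis.
    return tuple(
        sum(w * vertex[axis] for w, vertex in zip(bary, triangle))
        for axis in range(3)
    )


# The weights stay fixed; only the triangle's vertices change with breathing.
bary = (0.25, 0.25, 0.5)
triangle_exhale = [(0.0, 0.0, 0.0), (4.0, 0.0, 0.0), (0.0, 4.0, 0.0)]
triangle_inhale = [(0.0, 0.0, 2.0), (4.0, 0.0, 2.0), (0.0, 4.0, 2.0)]

center_exhale = anchored_center(triangle_exhale, bary)
center_inhale = anchored_center(triangle_inhale, bary)
```

Because the Gaussian has no positional freedom of its own in this sketch, it cannot leave the airway surface to explain a pixel; any motion has to come from the mesh.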

Recovering breathing from RGB alone

The respiratory cycle is recovered directly from endoscopic video, with no external sensors or breath-hold protocol. This is the first quantitative evaluation of breathing phase estimation in bronchoscopy. Because the reconstruction adapts continuously to the current anatomical state, it sidesteps the central failure mode of static-CT pipelines: assuming the patient matches a frozen reference.

Clinical implication

Target localization reaches 1.22 mm, inside the 3 mm clinically relevant tolerance for bronchoscopic biopsy. At this level of accuracy, breathing-induced CT-to-body divergence is no longer the bottleneck in navigation, removing the practical motivation for disruptive breath-hold protocols during tissue sampling.
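
The localization claim reduces to a simple check: measure the distance between the predicted and ground-truth target positions and compare it with the tolerance. The sketch below assumes a plain Euclidean distance in millimetres and uses the 3 mm tolerance quoted in the abstract. The function names and the sample coordinates are hypothetical, and the paper's exact metric may differ.

```python
import math
from typing import Sequence


def localization_error_mm(predicted: Sequence[float], truth: Sequence[float]) -> float:
    """Euclidean distance between predicted and true target positions (mm)."""
    return math.dist(predicted, truth)


def within_tolerance(error_mm: float, tolerance_mm: float = 3.0) -> bool:
    """True when the error is inside the assumed clinical tolerance."""
    return error_mm <= tolerance_mm


# Made-up target positions in millimetres, for illustration only.
predicted_target = (10.0, 20.5, 30.0)
true_target = (10.0, 20.0, 31.2)

error = localization_error_mm(predicted_target, true_target)
acceptable = within_tolerance(error)
```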

Replacing per-frame unconstrained deformation with a single scalar breathing parameter over a precomputed deformation field collapses the optimization to a low-dimensional subspace, which is what enables the order-of-magnitude training speedup over mesh-deforming baselines.
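
The dimensionality argument can be made concrete with a minimal sketch. It assumes the deformation field is stored as one exhale-to-inhale displacement vector per mesh vertex and that intermediate states are linear blends controlled by a phase in [0, 1]. Both are simplifying assumptions for illustration (the actual trajectory may be nonlinear), and `deform_vertices` and the toy data are hypothetical names and values.

```python
from typing import List, Tuple

Vec3 = Tuple[float, float, float]


def deform_vertices(exhale: List[Vec3], displacement: List[Vec3], phase: float) -> List[Vec3]:
    """Move each exhale vertex along its precomputed displacement by `phase`."""
    if not 0.0 <= phase <= 1.0:
        raise ValueError("phase must lie in [0, 1]")
    return [
        (x + phase * dx, y + phase * dy, z + phase * dz)
        for (x, y, z), (dx, dy, dz) in zip(exhale, displacement)
    ]


# Toy airway patch: two vertices (mm) and their exhale-to-inhale displacements (mm).
exhale_vertices = [(0.0, 0.0, 0.0), (10.0, 0.0, 0.0)]
displacement_field = [(0.0, 0.0, 8.0), (0.0, 2.0, 12.0)]

# One scalar per frame determines the whole mesh state.
mid_breath = deform_vertices(exhale_vertices, displacement_field, 0.5)
```

Under these assumptions, each frame contributes one unknown (the phase) instead of three unknowns per vertex, which is the low-dimensional subspace the paragraph above refers to.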

Acknowledgments

This work is supported by National Institutes of Health projects R21EB035832, “Next-gen 3D Modeling of Endoscopy Videos,” and R21EB037440, “Gen-AI Airway Simulator for 3D Endoscopy.”

Template adapted from the NFL-BA project page.